TL;DR: Build a real-time AI sales analytics pipeline that tracks lead quality, conversion rates, and pipeline health automatically. It uses AI to surface insights that manual dashboards miss - like which lead sources actually convert vs. which just look busy.

“Who’s your best salesperson?”

Ask any sales leader. They’ll name whoever brought in the most revenue last quarter. Sara Chen - $1.2M in closed deals, highest win rate on the team. Give her the bonus, right?

Not so fast.

When we applied a single formula to the same dataset, Mike Johnson came out on top. His deals were smaller, but he closed them faster. His velocity - revenue generated per unit of time - was higher than Sara’s.

They’re both great salespeople. But they’re great at different things. And the formula that revealed this distinction is the same one that handles about 70% of the sales analytics questions you’ll ever need to ask.

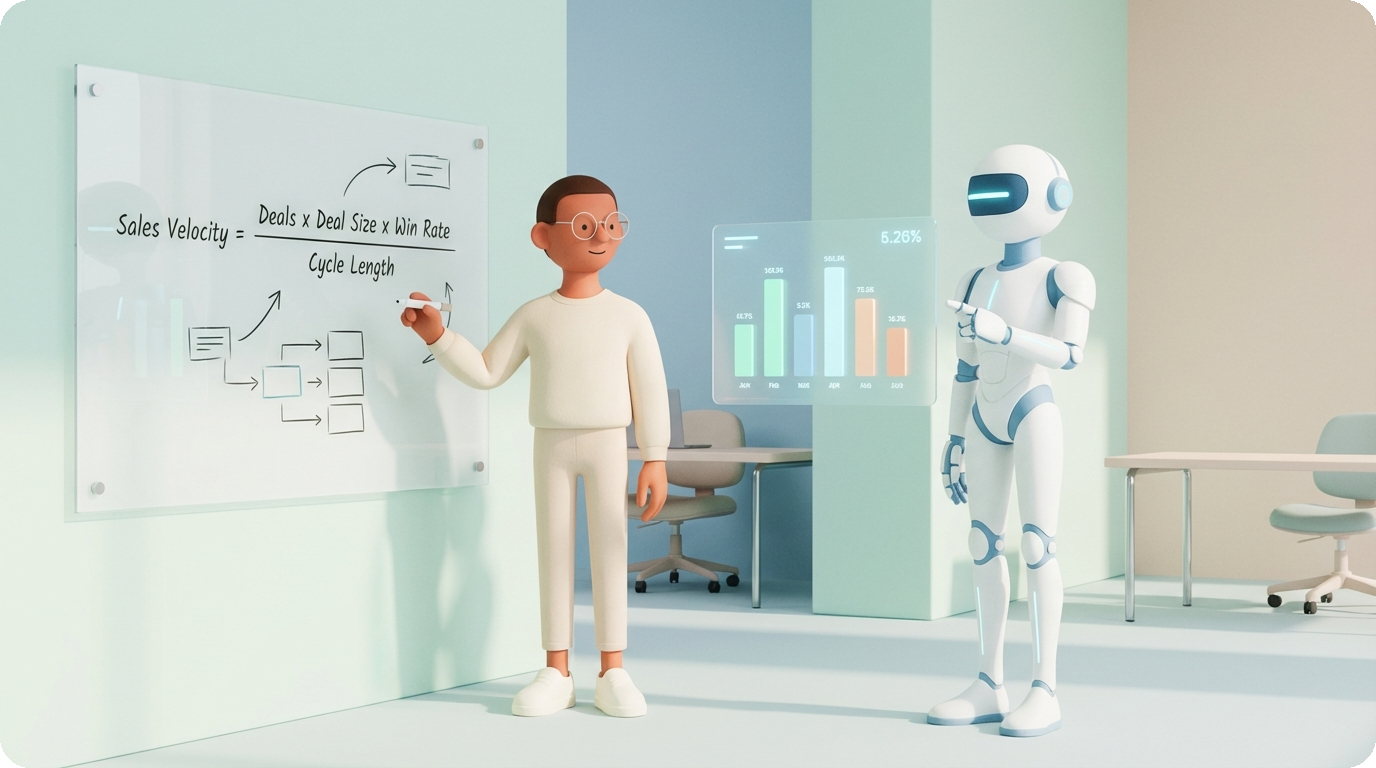

Here it is:

Sales Velocity = (Number of Deals x Average Deal Size x Win Rate) / Sales Cycle Length

That’s it. One formula. Four variables.
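To make the formula concrete, here is a minimal Python sketch. The function name `sales_velocity` and the input numbers are illustrative assumptions, not figures from a real rep.

```python
def sales_velocity(num_deals: int, avg_deal_size: float,
                   win_rate: float, cycle_days: float) -> float:
    """Revenue per day: (deals x average deal size x win rate) / cycle length."""
    if cycle_days <= 0:
        raise ValueError("cycle_days must be positive")
    return num_deals * avg_deal_size * win_rate / cycle_days


# Illustrative inputs: 45 deals, $52K average size, 38% win rate, 67-day cycle.
velocity = sales_velocity(45, 52_000, 0.38, 67)
print(f"${velocity:,.0f} per day")  # prints "$13,272 per day"
```

Because the four variables multiply and divide, a 10% improvement in any one of them moves velocity by roughly the same amount, which is why it helps to look at each component separately.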

The idea comes from Andy Grove’s High Output Management, where he argues that any production process can be understood through three lenses:

Conversion - what percentage of efforts actually produce results (your Win Rate)

Speed - how quickly the process moves (your Sales Cycle Length)

Volume - how many resources go in and come out (your Number of Deals x Deal Size)

Here’s why velocity matters more than raw revenue. Take our TechCorp example with 1,010 deals:

Sara Chen closes big. Enterprise deals, $80K+ average. But her sales cycles run 90+ days. She’s optimized for size.

Mike Johnson closes fast. Mid-market deals, $45K average. But his cycles are under 60 days. He’s optimized for speed.

When you multiply it all out and divide by time, Mike generates more pipeline value per day. If you’re trying to decide who to clone - who to build your process around - velocity tells you something revenue alone can’t.

The important thing isn’t that Mike is “better.” It’s that without velocity, you’d never even ask the question. You’d just look at the revenue leaderboard and move on. (This is the same principle behind building a strong ICP - the obvious answer isn’t always the right one.)

And this is the first lesson about using AI for analytics: by default, AI reaches for the most obvious metric. It’ll rank by revenue because that’s what most people mean by “best.” Your job is to give it the right formula. AI executes brilliantly - but you need to define what “good” actually means.

Once you start asking AI to analyze your sales data, you’ll get answers fast. The danger is that those answers will be precise and wrong. Here are three traps we’ve seen trip up sales teams repeatedly.

“Reps who make more calls close more deals.” Sounds reasonable. And when you run the correlation, it checks out - won deals have more activities than lost deals.

But think about it. Which deals naturally accumulate more activities? The ones that progress. A deal where you got the first call, booked a demo, sent a proposal, and negotiated terms has four activities by definition. A deal that died after the first cold call has one.

The outcome determines the activity count, not the other way around. It’s a circular dependency.

This matters because the tempting conclusion - “force reps to do more activities” - doesn’t fix the root cause. If all those extra activities are voicemails and follow-up emails to dead leads, you’ve just created busywork with great-looking metrics.

Instead: look at the type and outcome of activities. Did the demo happen? Did the proposal get a response? That’s where the signal is.
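One way to sketch this in Python: count meaningful outcomes next to raw activity totals. The log layout, the outcome labels, and the `MEANINGFUL` set are all assumptions for illustration; your CRM will use different names.

```python
from collections import defaultdict

# Hypothetical activity log rows: (deal_id, activity_type, outcome).
activities = [
    ("D1", "call", "connected"), ("D1", "demo", "held"), ("D1", "proposal", "replied"),
    ("D2", "call", "voicemail"), ("D2", "email", "no_reply"), ("D2", "email", "no_reply"),
    ("D3", "call", "connected"), ("D3", "demo", "no_show"),
]

# Outcomes that suggest the buyer actually engaged (an assumption for this sketch).
MEANINGFUL = {("call", "connected"), ("demo", "held"), ("proposal", "replied")}


def summarize(log):
    """Return {deal_id: (total_activities, meaningful_activities)}."""
    totals = defaultdict(lambda: [0, 0])
    for deal_id, kind, outcome in log:
        totals[deal_id][0] += 1
        if (kind, outcome) in MEANINGFUL:
            totals[deal_id][1] += 1
    return {deal: tuple(counts) for deal, counts in totals.items()}


summary = summarize(activities)
for deal, (total, meaningful) in sorted(summary.items()):
    print(deal, "total:", total, "meaningful:", meaningful)
```

In this made-up log, D1 and D2 both show three activities, but only D1 has any buyer engagement. A raw activity count would treat them as equal.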

Before blaming your bottom performers, check what they’re working with.

If your top rep gets all the inbound hot leads while your junior reps get cold outbound lists, the win rate gap isn’t about skill - it’s about input quality. Money goes to money.

In the TechCorp dataset, we checked the distribution of Hot, Warm, and Cold leads across all reps. The result: no systematic bias. Most reps had a similar mix. So the performance gap was real - it came from how they worked the leads, not which leads they got.

But I’ve seen teams where the top performer was “the best” simply because the round-robin algorithm gave them first pick of every inbound lead. Fix the distribution, and suddenly your “B players” look a lot more capable.

Always check before you blame.
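A quick version of that check, assuming each deal row carries a rep name and a lead tier. The rows and rep labels below are invented for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical rows: (rep, lead_tier).
deals = [
    ("Rep A", "Hot"), ("Rep A", "Hot"), ("Rep A", "Warm"), ("Rep A", "Cold"),
    ("Rep B", "Hot"), ("Rep B", "Warm"), ("Rep B", "Cold"), ("Rep B", "Cold"),
]


def tier_mix(rows):
    """Return each rep's share of Hot/Warm/Cold leads as fractions of their total."""
    counts = defaultdict(Counter)
    for rep, tier in rows:
        counts[rep][tier] += 1
    return {
        rep: {tier: n / sum(c.values()) for tier, n in c.items()}
        for rep, c in counts.items()
    }


mix = tier_mix(deals)
for rep, shares in sorted(mix.items()):
    print(rep, {tier: f"{share:.0%}" for tier, share in sorted(shares.items())})
```

If one rep's Hot share is far above the rest, compare win rates within each tier before drawing conclusions about skill.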

Zombie deals are technically “open” in your CRM but functionally dead. Created more than 120 days ago. No meaningful activity in the last 30 days. Just sitting there, inflating your pipeline numbers.

In TechCorp’s data: roughly 18 zombie deals sitting on approximately $820K in “pipeline value.” That’s $820K your forecast counts as possible revenue that will almost certainly never close.

Here’s the subtle part. When we asked AI to find zombie deals, it used the LastActivity column from the main table. Quick and easy. But that column is a summary field - it might not reflect actual activity dates from the detailed activities table. The AI made a reasonable assumption that happened to be wrong.

This is how analytics errors creep in. AI doesn’t tell you it’s making assumptions. It just picks the most accessible data and runs with it.
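Here is a sketch of the safer approach: derive the last activity date from the detailed activity rows instead of trusting a summary field. The field names (`id`, `created`, `value`, `status`) and the sample rows are assumptions; the 120-day and 30-day thresholds come from the definition above.

```python
from datetime import date


def find_zombies(deals, activities, today, min_age_days=120, idle_days=30):
    """Open deals older than min_age_days with no logged activity in idle_days.

    deals: list of dicts with id, created (date), value, status.
    activities: list of (deal_id, activity_date) from the detailed activity table.
    """
    last_activity = {}
    for deal_id, when in activities:
        if deal_id not in last_activity or when > last_activity[deal_id]:
            last_activity[deal_id] = when

    zombies = []
    for deal in deals:
        if deal["status"] != "open":
            continue
        age = (today - deal["created"]).days
        # Assumption: a deal with no logged activity was last touched at creation.
        last_touch = last_activity.get(deal["id"], deal["created"])
        idle = (today - last_touch).days
        if age > min_age_days and idle > idle_days:
            zombies.append(deal)
    return zombies


today = date(2025, 6, 1)
deals = [
    {"id": "Z1", "created": date(2025, 1, 1), "value": 40_000, "status": "open"},
    {"id": "A1", "created": date(2025, 1, 1), "value": 25_000, "status": "open"},
    {"id": "N1", "created": date(2025, 5, 1), "value": 10_000, "status": "open"},
]
activities = [("Z1", date(2025, 3, 1)), ("A1", date(2025, 5, 20)), ("N1", date(2025, 5, 2))]

zombies = find_zombies(deals, activities, today)
zombie_value = sum(d["value"] for d in zombies)
print([d["id"] for d in zombies], zombie_value)
```

Running the same filter once against the summary column and once against the activity table is a cheap way to see whether the two sources disagree.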

The actual process of going from raw CRM data to actionable insights follows three steps. We’ve run this workflow live with dozens of teams, and it consistently takes about 15 minutes once you know the pattern.

Before asking any business questions, let AI explore the dataset. This means: column types, missing values, duplicate records, date ranges, and distribution of key fields.

Why this matters: if 40% of your Competitor column is blank, any competitive analysis will be based on partial data. If your date formats are inconsistent, cycle time calculations will be wrong. You need to know what you’re working with.

Ask: “Profile this dataset. Show me column types, missing value rates, date ranges, and the distribution of key categorical fields like Stage, Product, and LeadSource.”
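If you want to sanity-check the AI's profile yourself, missing-value rates are easy to compute with the standard library. The sample CSV below is made up; `DealID` is an assumed column name, while Stage, LeadSource, and Competitor follow the fields mentioned above.

```python
import csv
import io

SAMPLE = """DealID,Stage,LeadSource,Competitor
1,Won,Inbound,Acme
2,Lost,Outbound,
3,Negotiation,,
4,Won,Inbound,
"""


def missing_rates(csv_text):
    """Return {column: fraction of rows where the value is blank}."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    if not rows:
        return {}
    return {
        col: sum(1 for row in rows if not (row[col] or "").strip()) / len(rows)
        for col in rows[0]
    }


rates = missing_rates(SAMPLE)
for col, rate in rates.items():
    print(f"{col}: {rate:.0%} missing")
```

For a real export, replace `io.StringIO(csv_text)` with an open file handle. In this sample, Competitor is 75% blank, which is exactly the kind of gap that should stop you from running a competitive analysis on it.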

This is where it gets powerful. Instead of writing SQL queries or building pivot tables, you describe what you want to know:

• “Calculate sales velocity by rep. Show top 5 and bottom 5.”

• “Find deals stuck in Negotiation for more than 60 days. What’s their total value?”

• “Compare win rates by lead source. Which source has the best ROI profile?”

AI calculates the metrics, generates tables, and often spots patterns you didn’t ask about. The key is to start with your master formula (velocity) and then drill into the components that seem off.
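For reference, this is roughly what the first prompt asks the AI to compute. It is a sketch under stated assumptions: "number of deals" means closed deals, average deal size is taken over won deals, and cycle length is averaged over all closed deals. Other definitions are reasonable, so ask the AI which one it used. The rows are invented.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical closed deals: (rep, amount, outcome, cycle_days).
closed_deals = [
    ("Rep A", 80_000, "won", 90), ("Rep A", 80_000, "lost", 90),
    ("Rep B", 45_000, "won", 60), ("Rep B", 45_000, "won", 60),
    ("Rep B", 45_000, "lost", 60),
]


def velocity_by_rep(rows):
    """Return {rep: sales velocity in revenue per day}."""
    by_rep = defaultdict(list)
    for rep, amount, outcome, cycle_days in rows:
        by_rep[rep].append((amount, outcome, cycle_days))

    result = {}
    for rep, deals in by_rep.items():
        won_amounts = [amount for amount, outcome, _ in deals if outcome == "won"]
        num_deals = len(deals)
        win_rate = len(won_amounts) / num_deals
        avg_size = mean(won_amounts) if won_amounts else 0.0
        avg_cycle = mean(cycle for _, _, cycle in deals)
        result[rep] = num_deals * avg_size * win_rate / avg_cycle
    return result


velocities = velocity_by_rep(closed_deals)
ranking = sorted(velocities, key=velocities.get, reverse=True)
for rep in ranking:
    print(f"{rep}: ${velocities[rep]:,.0f}/day")
```

In this toy data the rep with smaller deals ranks first, because the higher win rate and shorter cycle outweigh the deal size.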

Once you’ve found the metrics that matter, you can ask AI to generate a complete HTML dashboard with charts, filters, and KPIs. This isn’t a mockup - it’s a working interactive file you can open in a browser and share with your team.

The entire process from “here’s my CSV” to “here’s a dashboard” takes about 15 minutes. Not because the AI is slow, but because the human thinking - deciding which questions to ask, verifying the answers - takes time. And it should.

The following examples come from teams who ran this workflow on their own data during our workshop sessions. The numbers and industries are real. Company names are changed.

A legal tech company had been running LinkedIn outbound for 12 months. 20,000 leads contacted. Their process: AI sends LinkedIn message → prospect responds → Slack notification to AE → AE sends booking link → prospect books a call → CRM tracking begins.

When they analyzed the data, the number that jumped out: 700 leads had responded with positive interest. But only 200 had actually booked a call and entered the CRM. That’s a 71% drop at the handoff point.

500 interested prospects just… disappeared. The AE was supposed to send a booking link after each positive response. But with new leads coming in every day, follow-ups on “old” positive responses kept getting deprioritized.

The fix wasn’t a coaching session. It was automation: if a positive response doesn’t result in a booking within 48 hours, the system sends an automatic reminder to the prospect and flags the AE. Remove the human from the handoff where possible. (We’ve seen similar patterns in how salespeople actually use AI - the biggest wins come from automating handoff points, not individual tasks.)

The insight only surfaced because they connected their outbound data (LinkedIn responses) with their CRM data (booked calls). Neither dataset alone told the story.
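A minimal sketch of that join, plus the 48-hour rule, might look like this. The lead IDs, timestamps, and the `handoff_report` function are hypothetical; a real version would read from the outbound tool and the CRM.

```python
from datetime import datetime, timedelta


def handoff_report(responses, booked_ids, now, sla_hours=48):
    """Compare positive replies against CRM bookings.

    responses: {lead_id: datetime of the positive reply} from the outbound tool.
    booked_ids: set of lead ids that booked a call and exist in the CRM.
    Returns (drop_rate, overdue_lead_ids).
    """
    unbooked = {lead: ts for lead, ts in responses.items() if lead not in booked_ids}
    overdue = sorted(
        lead for lead, ts in unbooked.items()
        if now - ts > timedelta(hours=sla_hours)
    )
    drop_rate = len(unbooked) / len(responses) if responses else 0.0
    return drop_rate, overdue


now = datetime(2025, 6, 10, 9, 0)
responses = {
    "L1": datetime(2025, 6, 1, 9, 0),   # old reply, booked
    "L2": datetime(2025, 6, 5, 9, 0),   # old reply, never booked
    "L3": datetime(2025, 6, 9, 15, 0),  # fresh reply, still inside 48 hours
    "L4": datetime(2025, 6, 2, 9, 0),   # old reply, never booked
}
booked = {"L1"}

drop_rate, overdue = handoff_report(responses, booked, now)
print(f"drop rate: {drop_rate:.0%}, overdue: {overdue}")
```

The `overdue` list is what would feed the automatic reminder and the flag to the AE.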

A biotech company was testing three different messaging strategies for their outbound:

• Strategy A (cost angle): “We’ll make it cheaper” → 33% response rate

• Strategy B (convenience angle): Lower response rate

• Strategy C (regulatory compliance angle): Lower response rate

On small sample sizes, the difference was already visible. AI confirmed statistical significance even with limited data - you don’t need to be a statistician to check this.

The decision: reallocate budget to 70% Strategy A, 15% each for B and C (you still want to keep testing).
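If you want to see what a significance check involves, here is a two-proportion z-test using only the standard library. The counts are hypothetical (the source only gives Strategy A's 33% response rate), and for very small samples an exact test such as Fisher's is usually the safer choice.

```python
import math


def two_proportion_z_test(success_a, n_a, success_b, n_b):
    """Return (z, two-sided p-value) for the difference between two response rates."""
    p_a = success_a / n_a
    p_b = success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in outcomes; the test is undefined")
    z = (p_a - p_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail probability
    return z, p_value


# Hypothetical counts: Strategy A got 20 replies from 60 messages (33%),
# Strategy B got 8 replies from 60 messages (13%).
z, p_value = two_proportion_z_test(20, 60, 8, 60)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A p-value below 0.05 is the conventional threshold, but with samples this small it is worth rerunning the check as more responses come in.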

A payment technology startup had four ICP segments they were testing:

| Segment | Interest Rate | Budget |
| --- | --- | --- |
| Startup founders | Low | Yes |
| Researchers | High | Yes |
| Postdocs | High | Often no |
| Accelerator grads | Medium | Varies |

The intuitive move was to target both researchers and postdocs, since both showed high interest. But the data showed a critical difference: postdocs often didn’t have purchasing authority or budget. Researchers did.

Even on small data, you can spot statistically significant outliers and make prioritization decisions. The key is to look at both interest and ability to buy.

Verification is the part most people skip. And it’s the part that will save your credibility.

AI-generated analytics look polished. They come with tables, charts, and confident language. That’s exactly what makes them dangerous - they feel trustworthy whether they’re right or wrong.

Pick three numbers from the AI’s output. Go back to the raw data and verify them manually. Open the CSV, filter to the relevant rows, and check the math yourself.

This catches column-selection errors (the zombie deals trap), date parsing mistakes, and filter logic problems. It takes five minutes and prevents presenting wrong numbers to your VP of Sales.

Ask the same question two different ways. Better yet, ask it in a completely new session where the AI doesn’t have context from previous answers.

“What’s Mike Johnson’s win rate?” and “Show me all of Mike Johnson’s closed deals and calculate what percentage were won” should give you the same number. If they don’t, dig into why.
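One common reason the two answers diverge is the denominator: the first phrasing leaves room for the AI to divide by all deals, while the second pins it to closed deals. The sketch below uses invented rows and a placeholder rep name to show the gap.

```python
# Hypothetical deal rows: (rep, status) where status is "won", "lost", or "open".
deals = [
    ("Rep A", "won"), ("Rep A", "lost"), ("Rep A", "open"), ("Rep A", "won"),
]


def win_rate_closed_only(rows, rep):
    """Won deals divided by closed (won + lost) deals."""
    statuses = [status for r, status in rows if r == rep]
    closed = [s for s in statuses if s in ("won", "lost")]
    return closed.count("won") / len(closed) if closed else None


def win_rate_all_deals(rows, rep):
    """Won deals divided by every deal, including ones still open."""
    statuses = [status for r, status in rows if r == rep]
    return statuses.count("won") / len(statuses) if statuses else None


closed_rate = win_rate_closed_only(deals, "Rep A")
all_rate = win_rate_all_deals(deals, "Rep A")
print(f"closed only: {closed_rate:.0%}, all deals: {all_rate:.0%}")
```

Neither number is wrong on its own. The problem is presenting one while your audience assumes the other.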

When asking AI to calculate a metric, explicitly request that it shows the calculation steps. Not just “Sales Velocity = $X” but “Number of deals: 45, Average deal size: $52K, Win rate: 38%, Average cycle: 67 days, therefore velocity = (45 x 52000 x 0.38) / 67 = $13,272/day.”

This lets you catch assumption errors before they become presentation errors. Remember the zombie deals example - if you’d asked AI to show which column it used for “last activity,” you’d have caught the shortcut immediately.

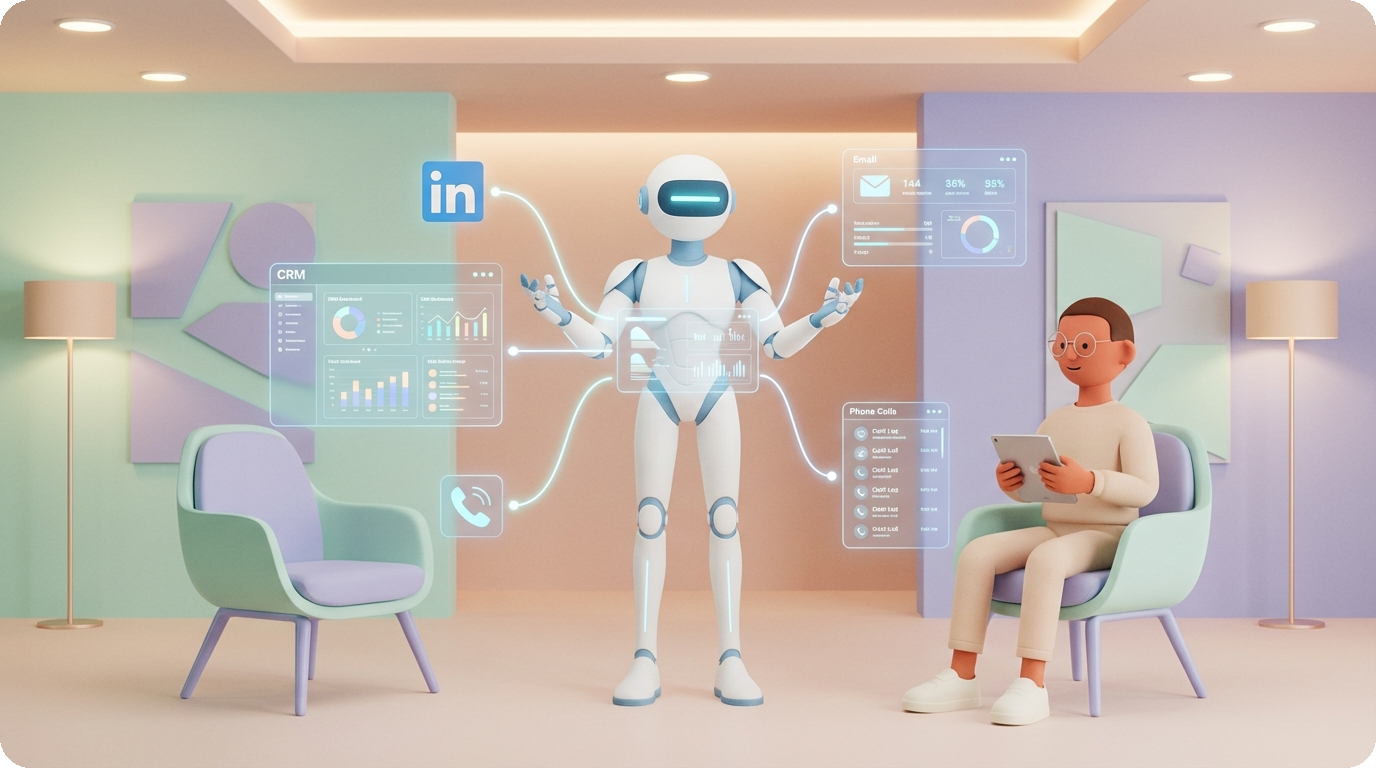

Single-dataset analysis is useful. Cross-dataset analysis is where you find the insights that actually change how you run your sales process.

When you combine data from different stages of your funnel - outbound metrics, lead scoring, call evaluations, and CRM outcomes - patterns emerge that no single dataset can reveal:

High LinkedIn acceptance rate + low response rate → Your targeting is right (people accept because you’re relevant), but your messaging is wrong (they don’t reply because the value prop doesn’t land). Fix the message, not the audience.

High SPIN scores + deal losses → Your reps are asking great discovery questions to the wrong people. The call execution is fine - the targeting is off. This is a lead quality problem, not a coaching problem.

Outbound closes faster than inbound → Counterintuitive, but we’ve seen this. It usually means your outbound targeting is more precise than your inbound qualification. The leads you choose to pursue are better-fit than the leads that find you.

High ICP match score + low discovery quality → You’re finding the right companies but fumbling the first conversation. Training investment should go to discovery call frameworks, not prospecting tools.

These cross-stream insights require connecting datasets that usually live in different tools. Your outbound platform, your CRM, your call recording software, your lead scoring system. The analysis itself is straightforward once the data is in one place. Getting it there is the hard part - and it’s exactly the kind of problem that AI sales tools with CRM connectors and data integrations solve well.

You don’t need a fancy tool to start. Export your CRM data to a CSV and try these:

1. “Calculate sales velocity by rep. Who has the highest velocity vs. highest revenue? Are they the same person?” - This alone will change how you think about your team.

2. “Find all deals open for more than 90 days with no activity in the last 30 days. What’s their total value?” - Your zombie deals are hiding revenue from your forecast.

3. “Compare win rates by lead source. Then compare average deal size and cycle length by source.” - The source with the highest win rate isn’t always the best ROI source.

4. “Show me the spread between my top 3 and bottom 3 reps on win rate, deal size, and cycle time.” - If the spread is large, process standardization will boost total revenue more than hiring another top performer.

5. “Cross-reference lead tier (Hot/Warm/Cold) with actual outcomes. What’s the false positive rate on Hot leads?” - This tells you whether your lead scoring is actually working or just giving you false confidence.

Each of these takes about 2 minutes with AI. Together, they give you a picture of your pipeline that most teams never see - even teams with dedicated RevOps analysts.
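As an example of what the fifth prompt computes, here is a small sketch. It assumes "false positive" means a Hot lead whose deal closed as lost, and it ignores deals that are still open. Your scoring model may define this differently, and the rows are invented.

```python
# Hypothetical rows: (lead_tier, outcome) where outcome is "won", "lost", or "open".
leads = [
    ("Hot", "won"), ("Hot", "lost"), ("Hot", "lost"), ("Hot", "open"), ("Hot", "won"),
    ("Warm", "lost"), ("Cold", "lost"), ("Cold", "won"),
]


def hot_false_positive_rate(rows):
    """Share of closed Hot leads that were lost; None if no Hot lead has closed."""
    closed_hot = [
        outcome for tier, outcome in rows
        if tier == "Hot" and outcome in ("won", "lost")
    ]
    if not closed_hot:
        return None
    return closed_hot.count("lost") / len(closed_hot)


fp_rate = hot_false_positive_rate(leads)
print(f"Hot false positive rate: {fp_rate:.0%}")  # prints "Hot false positive rate: 50%"
```

Compare this rate with the loss rate on Warm and Cold leads. If the three are close, the Hot label is not telling you much.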

Can AI replace a data analyst for sales reporting?

For 70-80% of routine analysis - pipeline reviews, win rate breakdowns, forecast checks - yes. AI handles these in minutes instead of hours. Where you still need human judgment: defining the right metrics (velocity vs. revenue), interpreting cross-functional patterns, and making strategic decisions about what to change. Think of AI as replacing the spreadsheet work, not the strategic thinking.

What data do I need to get started?

A CRM export with these columns: deal ID, rep name, deal size, stage, created date, close date, and win/loss outcome. That’s enough for velocity analysis. Add lead source for ROI analysis. Add activity logs for zombie deal detection. The more data streams you connect, the more cross-stream patterns you can find - but start simple.

How accurate are AI-generated sales dashboards?

As accurate as your data and your verification. The calculations themselves are usually reliable, especially when the AI runs code to compute them instead of estimating in prose. The bigger risk is in which data it uses and how it interprets your question (the zombie deals trap). Follow the three verification rules - spot-check, cross-validate, show your work - and the accuracy is comparable to a junior analyst’s output.

What’s the difference between vibe analytics and traditional BI?

Traditional BI requires SQL knowledge, data modeling, and tool expertise (Tableau, Looker, Power BI). Vibe analytics - analyzing data through natural language conversation with AI - lets anyone with domain knowledge ask questions directly. The trade-off: traditional BI gives you reproducible, scheduled reports. Vibe analytics gives you fast, exploratory answers. Most teams benefit from both - AI for exploration and hypothesis testing, traditional BI for production dashboards.

How do I connect my CRM to AI for live analysis?

Most modern AI coding tools support MCP (Model Context Protocol) connectors that plug directly into HubSpot, Salesforce, and other CRMs. This means you can run the same analysis workflow - explore, analyze, dashboard - on live CRM data instead of CSV exports. The setup takes about 10 minutes for most CRMs.

Sales analytics doesn’t have to be complicated. One formula - Sales Velocity - covers most of what you need. AI handles the computation. Your job is three things:

1. Define the right metric. Don’t let AI default to the obvious answer. Give it velocity, not just revenue.

2. Verify the output. Spot-check, cross-validate, require shown work. Every time.

3. Connect the streams. The most valuable insights come from combining data that usually lives in separate systems.

The teams that get the most from AI analytics aren’t the ones with the most sophisticated tools. They’re the ones who ask better questions and check the answers before presenting them.

I’m Bayram, founder of Onsa.ai. We build AI agents that handle sales research, lead qualification, and pipeline analysis - so your team can focus on closing. This article is based on our AI Sales workshop where teams analyze real pipeline data with AI. Want to try it on your data? Get started with Onsa.ai.